Driving Industrial Innovation Through Enhanced Data Clarity

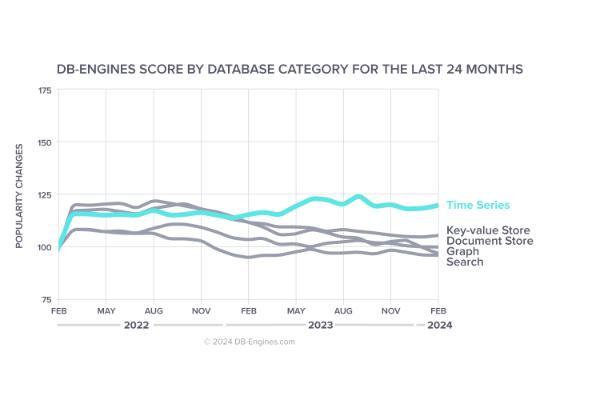

In the landscape of modern digital transformation, the ability to manage time-stamped information with precision is a core requirement for operational success. Forward-thinking enterprises often begin their journey by conducting a detailed time series database performance comparison to evaluate how different architectures handle high-concurrency writes and complex analytical queries. By selecting a system that minimizes resource consumption while maximizing throughput, organizations can build a resilient framework that supports real-time monitoring and historical deep-dives across thousands of industrial assets.

The Role of Architecture in Data Velocity

The fundamental challenge of industrial data lies in its relentless pace. Unlike traditional transactional data, time series data is append-heavy and arrives largely in time order, so the storage engine must sustain a high rate of sequential writes. Modern time series databases use specialized index structures, typically keyed on time, that locate data quickly without the per-row index maintenance that general-purpose relational systems usually incur.

This structural efficiency helps the database stay responsive even during large data bursts, such as those triggered by system-wide sensor updates. By laying data out on disk so that writes are mostly sequential, these systems give mission-critical applications a stable base with predictable write latency.
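As a rough illustration of why time-ordered appends keep both writes and lookups cheap, here is a minimal in-memory sketch. The class name `AppendOnlySeries` and its methods are hypothetical and do not correspond to any particular database. It assumes timestamps arrive in non-decreasing order, which lets a range query use binary search instead of a separate index.

```python
import bisect
from typing import List, Tuple


class AppendOnlySeries:
    """Hypothetical in-memory series: appends at the end, binary search on time."""

    def __init__(self) -> None:
        self.timestamps: List[int] = []
        self.values: List[float] = []

    def append(self, ts: int, value: float) -> None:
        # Assumption: data arrives in time order, so a write is a plain append.
        if self.timestamps and ts < self.timestamps[-1]:
            raise ValueError("out-of-order timestamp")
        self.timestamps.append(ts)
        self.values.append(value)

    def range(self, start: int, end: int) -> List[Tuple[int, float]]:
        # The timestamp list is sorted, so both bounds are found in O(log n).
        lo = bisect.bisect_left(self.timestamps, start)
        hi = bisect.bisect_left(self.timestamps, end)
        return list(zip(self.timestamps[lo:hi], self.values[lo:hi]))


series = AppendOnlySeries()
for ts, value in [(10, 1.0), (20, 2.0), (30, 3.0), (40, 4.0)]:
    series.append(ts, value)
print(series.range(20, 40))  # points with 20 <= ts < 40
```

Real storage engines add buffering, on-disk blocks, and handling for late data; the sketch only shows the ordering property they build on.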

Scalability and Resource Management

A primary goal for any data-heavy enterprise is achieving a balance between performance and cost. Distributed database systems allow for the seamless expansion of storage capacity by adding nodes to a cluster, ensuring that the infrastructure grows in lockstep with the business.

Intelligent Data Compression

Data compression is a vital component of efficient management. By using algorithms designed specifically for numerical time series, databases can achieve a significant reduction in storage footprint. This not only lowers hardware expenses but can also shorten query times, as less data needs to be read from disk to answer a query.
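One common idea behind such algorithms is delta encoding: store the difference between neighbouring values rather than the values themselves. The sketch below is illustrative only and assumes integer timestamps sampled at a regular interval; the function names are made up for this example. It combines delta encoding with run-length encoding so that a regular series collapses to a handful of entries.

```python
from typing import List, Tuple


def delta_encode(values: List[int]) -> List[int]:
    """Keep the first value, then store each difference from the previous one."""
    if not values:
        return []
    deltas = [values[0]]
    for prev, curr in zip(values, values[1:]):
        deltas.append(curr - prev)
    return deltas


def delta_decode(deltas: List[int]) -> List[int]:
    """Rebuild the original values with a running sum."""
    values, total = [], 0
    for d in deltas:
        total += d
        values.append(total)
    return values


def run_length_encode(items: List[int]) -> List[Tuple[int, int]]:
    """Collapse repeated neighbours into (value, count) pairs."""
    runs: List[Tuple[int, int]] = []
    for item in items:
        if runs and runs[-1][0] == item:
            runs[-1] = (item, runs[-1][1] + 1)
        else:
            runs.append((item, 1))
    return runs


timestamps = [1000, 1010, 1020, 1030, 1040, 1050]
deltas = delta_encode(timestamps)
print(run_length_encode(deltas))  # [(1000, 1), (10, 5)]
```

Six timestamps reduce to two pairs here. Production systems typically use more elaborate schemes, and the achievable ratio depends on how regular the data is.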

High Availability and Disaster Recovery

For industries such as power generation or chemical processing, data loss is not an option. High-performance systems incorporate automatic replication and failover protocols. These features ensure that even in the event of a localized hardware failure, the system continues to operate, preserving the integrity of the data stream and ensuring continuous visibility for operators.

Practical Implementation of Data Best Practices

To extract the most value from an IoT ecosystem, it is essential to focus on time series database performance strategies that streamline the entire data pipeline. Partitioning data by time interval lets the system skip every partition that falls outside the queried time range, which significantly speeds up dashboards and reporting tools. When these practices are built into the core architecture, the result is an agile system that can often answer common queries in fractions of a second.
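The effect of partitioning by time interval can be shown with a small sketch. The `PartitionedSeries` class and the one-hour `PARTITION_SECONDS` width are assumptions chosen for illustration, not settings from any specific product. A query computes which partitions overlap the requested range and never touches the rest.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

PARTITION_SECONDS = 3600  # hypothetical choice: one partition per hour


class PartitionedSeries:
    """Hypothetical store that buckets points by hour of their timestamp."""

    def __init__(self) -> None:
        self.partitions: Dict[int, List[Tuple[int, float]]] = defaultdict(list)

    def insert(self, ts: int, value: float) -> None:
        self.partitions[ts // PARTITION_SECONDS].append((ts, value))

    def query(self, start: int, end: int) -> Tuple[List[Tuple[int, float]], int]:
        """Return points with start <= ts < end and the partitions scanned."""
        first = start // PARTITION_SECONDS
        last = (end - 1) // PARTITION_SECONDS
        results: List[Tuple[int, float]] = []
        scanned = 0
        for key in range(first, last + 1):
            if key not in self.partitions:
                continue  # no data was written for this interval
            scanned += 1
            results.extend(p for p in self.partitions[key] if start <= p[0] < end)
        return results, scanned


store = PartitionedSeries()
for ts in (0, 1800, 3600, 7200, 10800):
    store.insert(ts, float(ts))
points, scanned = store.query(3600, 7200)
print(points, scanned)  # only the second hourly partition is read
```

Four partitions hold data in this example, yet the one-hour query reads a single partition. The same pruning is what keeps dashboard queries over recent data fast as history grows.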

Integration with Modern Analytical Tools

The true power of a database is realized when its data is put to work. Modern time series solutions are designed to integrate seamlessly with visualization platforms and machine learning frameworks. This allows for the creation of sophisticated digital twins and predictive models that can identify potential equipment failures before they occur.

Simplifying the User Experience

Modern query languages have evolved to be both powerful and accessible. By offering SQL-like syntax alongside specialized time-based functions, these databases allow analysts to perform complex aggregations—such as calculating moving averages or identifying outliers—without writing hundreds of lines of code. This accessibility ensures that data insights are available to every level of the organization.
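To make the two aggregations mentioned above concrete, here is what a moving average and a simple outlier check compute, written as plain Python rather than in any particular query language. The function names, the window size, and the z-score threshold of 2.0 are illustrative assumptions. A database with built-in time-based functions would express the same logic in a line or two of its own syntax.

```python
import statistics
from typing import List


def moving_average(values: List[float], window: int) -> List[float]:
    """Average of each run of `window` consecutive values."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


def find_outliers(values: List[float], threshold: float = 2.0) -> List[int]:
    """Indices of values more than `threshold` standard deviations from the mean."""
    if len(values) < 2:
        return []
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return []  # all values are identical, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mean) / std > threshold]


print(moving_average([1, 2, 3, 4, 5], window=3))   # [2.0, 3.0, 4.0]
print(find_outliers([10, 10, 10, 10, 10, 100]))    # [5]
```

The z-score rule is only one of several ways to flag outliers and is sensitive to small samples, so the threshold usually needs tuning for the signal in question.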

Edge-to-Cloud Synchronization

In many industrial scenarios, data is generated in remote or harsh environments. High-performance databases often include features for edge computing, allowing for local processing and filtered synchronization to a central repository. This reduces bandwidth costs and ensures that critical alerts are handled locally, without waiting on a round trip to the cloud, while the central cloud maintains the long-term historical record.
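One simple form of filtered synchronization is a deadband filter: the edge node forwards a reading only when it differs from the last forwarded value by at least a set amount, while alert checks run on every reading locally. The sketch below is a minimal illustration. The function names, the 1.0 threshold, and the 21.0 alert limit are all hypothetical values.

```python
from typing import List, Optional, Tuple

Reading = Tuple[int, float]  # (timestamp, value)


def deadband_filter(readings: List[Reading], threshold: float) -> List[Reading]:
    """Keep a reading only if it moved at least `threshold` since the last one sent."""
    forwarded: List[Reading] = []
    last_sent: Optional[float] = None
    for ts, value in readings:
        if last_sent is None or abs(value - last_sent) >= threshold:
            forwarded.append((ts, value))
            last_sent = value
    return forwarded


def local_alerts(readings: List[Reading], limit: float) -> List[Reading]:
    """Alert check that runs at the edge on every reading, filtered or not."""
    return [(ts, value) for ts, value in readings if value > limit]


readings = [(0, 20.0), (1, 20.1), (2, 20.2), (3, 21.5), (4, 21.6)]
print(deadband_filter(readings, threshold=1.0))  # [(0, 20.0), (3, 21.5)]
print(local_alerts(readings, limit=21.0))        # [(3, 21.5), (4, 21.6)]
```

Only two of the five readings cross the network, yet both readings above the limit still raise an alert at the edge. The trade-off is that the central copy is a reduced view of the raw signal, so the threshold should match the precision that later analysis requires.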

Ensuring Longevity with High-Performance Systems

Investing in a high-performance time series database is a strategic move that prepares an organization for the next decade of industrial growth. As sensor density increases and sampling rates rise, the underlying data layer must be robust enough to handle the load without degradation. A high-performance foundation provides the confidence to scale operations, explore new service models, and maintain a leading position in an increasingly competitive global market.

Conclusion: Data as a Catalyst for Growth

The transition to a data-driven model is a continuous process of refinement and optimization. By prioritizing the efficiency and speed of the data storage layer, enterprises can unlock the full potential of their IIoT investments. Clear, accessible, and high-speed data serves as the catalyst for smarter decision-making, improved safety protocols, and enhanced product quality.

As we look toward the future of industry, the role of specialized time series technology will only become more central. Organizations that embrace these high-performance solutions today will be well-equipped to navigate the complexities of tomorrow, turning raw data into a powerful engine for innovation and sustainable growth.